Do an experiment: keep Task Manager or any other CPU activity monitoring program up on your screen for a few hours or days, glancing at it every so often. Do you EVER see it above zero (other than momentary spikes)?

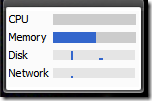

Here’s mine, from a Google sidebar gadget:

I’ve got a Dual Core and 2GB RAM. Currently I have open two copies of Visual Studio 2005, Word 2003, Outlook 2007, Paint.Net, RSS Bandit, Adobe Reader, IE, MSDN help, Windows Live Messenger, and Google Deskbar.

So that’s using just over 1 GB of RAM. And ZERO CPU. I’m watching this. The CPU meter goes up a little when I type, open a new program, compile my source code, etc., but most of the time it’s zero, even when I think I’m actually working.
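If glancing at a meter feels too anecdotal, the same experiment can be roughly automated: take a CPU reading every so often and count how many readings are meaningfully above zero. Here's a minimal Python sketch of that idea. The names `collect` and `summarize`, and the 5% "busy" threshold, are my own choices for illustration, and `sampler` is a placeholder for whatever function returns the current CPU percentage on your machine.

```python
import time
from statistics import mean


def collect(sampler, count, pause_seconds=1.0):
    """Call sampler() `count` times, pausing between calls, and return the readings.

    `sampler` is any zero-argument function returning CPU usage as a percentage.
    """
    readings = []
    for i in range(count):
        readings.append(float(sampler()))
        if i < count - 1:
            time.sleep(pause_seconds)
    return readings


def summarize(readings, busy_threshold=5.0):
    """Summarize readings; a reading counts as 'busy' if it exceeds busy_threshold."""
    if not readings:
        raise ValueError("need at least one reading")
    busy = sum(1 for r in readings if r > busy_threshold)
    return {
        "samples": len(readings),
        "mean_percent": mean(readings),
        "peak_percent": max(readings),
        "busy_fraction": busy / len(readings),
    }
```

For a real run, one option (assuming you have the third-party `psutil` package installed) is to pass `lambda: psutil.cpu_percent(interval=1)` as the sampler with `pause_seconds=0`, since that call already blocks for the one-second interval it measures. If your machine behaves like mine, `busy_fraction` should come out very small.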

I used to eschew running apps like Google Deskbar, wallpaper helpers like Display Fusion, or other system utilities that continually run. But I had a realization–it doesn’t matter! I could run many more utilities concurrently and still not come anywhere close to creating a slowdown on my computer.

Of course, I’m only talking about non-interfering, non-processor-intensive programs. This immediately excludes anti-virus programs, which continually interrupt every process to examine system behavior, and anything like video compression (duh) running in the background.

But things like desktop searching, system monitoring (if it’s not too intrusive), utilities, and any other independent process–yeah, just throw them on. They won’t make a dent.

The key word in that last paragraph is independent. Independent means they don’t depend on or interfere with other processes.
