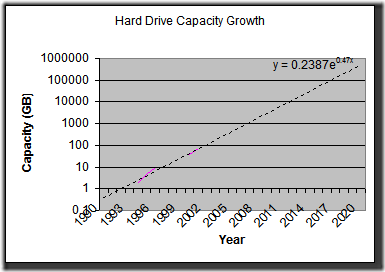

Anybody who’s taken high school or college mathematics knows how phenomenal exponential growth is. Even if the growth rate is very, very small, it eventually adds up. With that in mind, look at this quick-and-dirty chart I made in Excel, plotting the growth in hard drive capacity over the years. [source: http://www.pcguide.com/ref/hdd/hist-c.html]

OK, it’s ugly, but notice a few things:

- The pink points are the data, either taken from the source or added by me (I added 1000 GB for 2007).

- The scale is logarithmic, not linear. Each y-axis gridline represents a ten-fold increase in capacity.

- At the current rate of growth, by 2020, we’ll have 1,000,000 GB hard drives. That’s 1 petabyte (1 PB). (By the way, petabyte is not in Live Writer’s spelling dictionary. Get with the times, Microsoft!)

- The formula, as calculated by Excel, says that the drive capacity should double roughly every 2 years.
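
For anyone curious what an exponential trendline is doing under the hood, here is a minimal sketch in Python. It assumes the usual approach of fitting a straight line to the logarithm of capacity versus year; I haven't checked that Excel does exactly this internally. The function name `fit_doubling_time` is my own, and the data below is synthetic, generated from a perfect "doubles every 2 years" curve anchored at 1000 GB in 2007. It is not the chart's real data.

```python
import math

def fit_doubling_time(years, capacities_gb):
    """Fit a least-squares line to (year, log2(capacity)).

    Returns (doubling_years, predict), where predict(year) gives the
    fitted capacity in GB. Hypothetical helper, for illustration only.
    """
    n = len(years)
    logs = [math.log2(c) for c in capacities_gb]
    mean_x = sum(years) / n
    mean_y = sum(logs) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, logs))
    slope = sxy / sxx                  # doublings per year
    intercept = mean_y - slope * mean_x

    def predict(year):
        return 2 ** (slope * year + intercept)

    return 1 / slope, predict

# Synthetic points (NOT the real chart data): exact 2-year doubling,
# passing through 1000 GB in 2007.
years = list(range(1995, 2008, 2))
caps = [1000 * 2 ** ((y - 2007) / 2) for y in years]

doubling_years, predict = fit_doubling_time(years, caps)
print(f"doubling time: {doubling_years:.2f} years")
print(f"fitted capacity in 2007: {predict(2007):.0f} GB")
```

On a log-scale chart like the one above, this fitted curve shows up as a straight line, and its slope is what determines the doubling time.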

Also, this doesn’t really take into account multi-drive storage schemes like NAS, RAID, etc. Right now, it’s quite easy to lash individual drives together into packages like those for more space, redundancy, and so on. I’ll ignore that ability for now.

So 2020: that’s 12 years from now. We can expect to have a petabyte in our computers. That’s a LOT of space. Imagine the amount of data that can be stored. How about every book ever written? How about all your music, high-def DVDs, ripped with no lossy compression?

Tools such as Live Desktop and Google Desktop take on a whole new level of importance when faced with the task of cataloging petabytes of information on your home PC. Because, let’s face it, you’ll never delete anything. You’ll take thousands of pictures with your digital camera and never delete any of them. You’ll shoot hours of high-def footage and never watch or edit it, but you’ll want to find something in it (with automated voice recognition and image analysis, of course). Every e-mail you get over your entire lifetime can be permanently archived.

What if you could get a catalog of every song ever recorded? That would probably require more than a few petabytes, even compressed, but we’re heading that way. I don’t think the amount of music in the world is increasing exponentially, is it? Applications like iTunes and Windows Media Player, not to mention things like iPods, would need a very carefully designed interface to handle organizing and searching that much music. I think Windows Media Player 11 is incredible, but I don’t think it could handle more than about 100,000 songs without choking. Has anyone approached any practical limits with it?

What about the total information in the world–that probably is increasing exponentially. Will we eventually have enough storage so that everyone can have their own local, easily searchable copy of the vast sum of human knowledge and experience? (Ignoring the question of why we would want to)

Let’s extrapolate this growth out to the year 2100, roughly 90 years from now. I won’t show the graph, but it approaches 1E+20 GB by the year 2100.
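
As a rough sanity check, the two figures quoted in this post (about 1,000,000 GB in 2020 and about 1E+20 GB in 2100) imply a doubling time of their own. The sketch below works it out; the function name `implied_doubling_years` is just something I made up for illustration, and the inputs are the rounded numbers from the text, not values read off the actual trendline.

```python
import math

def implied_doubling_years(year_a, gb_a, year_b, gb_b):
    """Doubling time implied by two points on an exponential curve."""
    doublings = math.log2(gb_b / gb_a)   # how many times capacity doubled
    return (year_b - year_a) / doublings

# Rounded figures from the post: 1e6 GB in 2020, 1e20 GB in 2100.
t = implied_doubling_years(2020, 1e6, 2100, 1e20)
print(f"{t:.2f} years per doubling")
```

That works out to a bit over 1.7 years per doubling, which is in the same ballpark as the "roughly every 2 years" from the Excel formula.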

How do the economics of digital goods change when you can have an infinite number of them? It’s the opposite of real estate, which is an ever-diminishing good.

On my home PC, for the first time, I do have a lot of storage that isn’t being used. I have about 1 TB of storage, and about 300 GB free. I suppose I could rip all my DVDs and re-rip all my music with lossless compression (it’s currently all WMA at 192 kbps).

The rules of the game can change quickly when that much storage is available. It will be interesting to see what happens in the coming decades. Of course, all this discussion is completely ignoring the increasingly connected, networked world we live in.
